AI Policy

• Purpose. This Policy addresses the use of Generative Artificial Intelligence (as defined below) at Flynn Hodkinson Ltd. (“FH”). Generative Artificial Intelligence is a rapidly evolving technology that can bring great value to our efforts. However, its legal and technical risks are not yet well understood, and its potential for unintentional misuse merits a consistent, policy-based approach to using this technology within our environment.

• Scope. This Policy applies to all members of the Flynn Hodkinson Workforce. The term “FH Workforce” means FH employees, as well as independent contractors, interns, volunteers, and other individuals who are appointed or engaged to provide services or create work product on FH’s behalf.

This Policy does not apply to activities outside the scope of one’s work for Flynn Hodkinson unless such activities adversely affect the confidentiality, security, or reputation of FH.

This Policy covers all company systems, devices, technologies, and platforms, including software applications, hardware devices, cloud platforms, mobile applications, and any other technology interfaces.

• Definition of Generative Artificial Intelligence. For purposes of this Policy, the term “Generative Artificial Intelligence” or “GAI” refers to an artificial intelligence tool that is designed to produce new, previously unseen data in response to human input, using predictive algorithms applied to an underlying database of training data. GAI may include systems that generate new images, text, sounds, or other data types in response to human input.

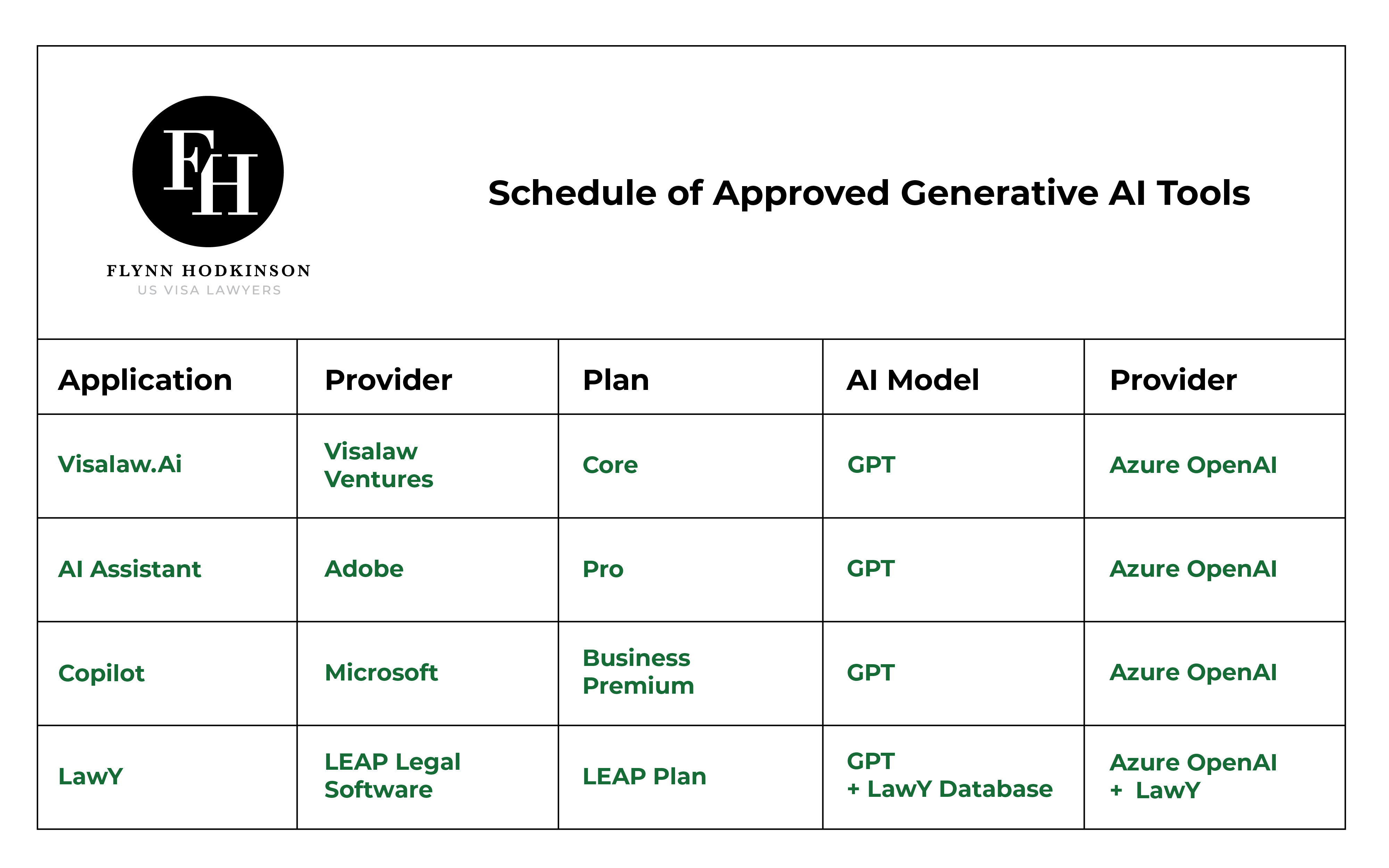

• Approved GAI Tools. Flynn Hodkinson may designate one or more GAI tools as approved for use by the FH Workforce (each an “Approved GAI Tool”). The Schedule of Approved GAI Tools appears as an addendum at the end of this Policy. Only Approved GAI Tools may be used on FH devices or to process FH information (including FH client information).

• Professional Responsibility. Members of the Flynn Hodkinson Workforce who use Approved GAI Tools may do so solely as supplementary instruments, such as to generate ideas or assist in the review or preparation of documents. While GAI can serve as a valuable tool, ultimate responsibility for the accuracy, relevance, and appropriateness of any final work product rests solely with the FH Workforce member using the Approved GAI Tool.

• General Rule. GAI tools are prone to generating fictitious case and statutory citations (a phenomenon often referred to as a “hallucination”). Accordingly, except as provided in Section 5(b), it is imperative that all outputs derived from Approved GAI Tools be meticulously reviewed, validated, and edited as necessary by the user before finalization. Use of Approved GAI Tools should not substitute for or diminish the critical thinking, judgment, and expertise that our FH Workforce members bring to their respective roles.

• Exception for Mass Data Processing. Where the value of using an Approved GAI Tool would be diminished by the requirement that all GAI outputs be meticulously reviewed and validated, FH may grant case-by-case exceptions to Section 5(a). For example, FH might use an Approved GAI Tool to search or summarize large datasets that cannot reasonably be validated by humans or even traditional computer algorithms. In this example, requiring the output of the GAI process to be reviewed and validated by humans might defeat the purpose of using the GAI tool.

• Client Consent for Allocation of Responsibility. Where FH cannot reasonably exercise full professional responsibility for the GAI output, the client’s informed written consent shall be obtained as described in Section 6(c). When structuring a matter in this way, the responsible attorney should consult their own bar’s equivalent of Illinois Rule of Professional Conduct 1.2.

• Client Rights. Our clients have a right to be informed about how Flynn Hodkinson uses GAI tools to process their matters and confidential information. This section sets out the rules for informing clients about our use of GAI tools, when and how clients may opt out of that use, and the circumstances under which we are required to obtain a client’s informed written consent before processing their information using a GAI tool.

• Notice of Artificial Intelligence Practices. At all times after implementation of this Policy, FH will maintain a current and accurate Notice of Artificial Intelligence Practices describing how FH uses GAI tools and safeguards client confidential information. This Notice should be clearly posted on the FH website. Clients should be provided with this Notice as part of their engagement documents, and again within a reasonable time after we make any material changes to our policies and procedures for using GAI tools. No information related to client matters or confidential information should be processed in GAI tools before the client has received this Notice.

• Client Opt-Out Rights. Except as provided in Section 6(e), clients have the right to restrict or prohibit the use of GAI tools in connection with their matters or to process their confidential information. A client may exercise this right at any time by providing written notice. Upon receipt of such notice, the client’s preferences should be clearly and prominently documented in the client file. All FH Workforce members on the file should be notified of the client’s opt-out and must take immediate steps to ensure that GAI tools are not used in any manner that violates the client’s expressed restrictions.

Accordingly, unless a client has opted out, members of the FH Workforce may use Approved GAI Tools for the following purposes:

• De-Identified Processing. Approved GAI Tools may be used as a work assistant without inputting any personally identifiable or client confidential information. This could include posing hypothetical questions to an Approved GAI Tool to help generate a checklist for drafting documents.

• Non-Sensitive Confidential Processing. Approved GAI Tools may also be used to process client identifying or confidential information other than Sensitive Personal Information as defined in the next section. For example, a document may be uploaded to an Approved GAI Tool to support efficient review of that document, provided any Sensitive Personal Information has first been redacted.

• Sensitive Personal Information. Prior to using an Approved GAI Tool to process Sensitive Personal Information, as defined below, FH must obtain the client’s informed written consent using the procedure described in Section 6(d). For the purposes of this Policy, Sensitive Personal Information includes:

• Social security numbers or tax returns

• Driver’s license numbers or state identification card numbers

• Account, credit card, or debit card numbers

• Medical information, including mental health or substance abuse treatment records

• Health insurance information

• Unique biometric identifiers or biometric information (as defined in Illinois law)

• Passwords and security questions and answers used to access computer accounts

• Any other information meeting the definition of “personal information” under the Illinois Personal Information Protection Act (PIPA), even if separated from a first name or first initial in combination with last name

• Informed Client Consent. Where client consent is required, it must be informed. The client must receive a clear explanation of the purpose of the processing, the type of data involved, the type of GAI tool to be used, the potential risks (including risks of disclosure or misuse), and the safeguards in place to protect the data. This information must be provided separately from other materials, and the client must be given the opportunity to ask questions before providing consent. Consent must be voluntary and may be revoked at any time and for any reason.

• Authority to Consent. The client may provide consent to process personal data that is (i) the personal data of the client or (ii) personal data of which the client is the lawful controller or processor. If the responsible attorney has reason to doubt the client’s authority to consent to the processing of the personal data provided, they must refrain from processing the data using GAI until appropriate authorization is confirmed.

• Discretionary Consents. The attorney responsible for any client or matter is free to require informed written consent for the use of Approved GAI Tools using criteria that are more restrictive than this Policy provides. If, in the professional judgment of the responsible attorney, informed written consent is prudent in any matter, then the responsible attorney’s judgment shall control.

• System-Wide Processing. “System-Wide Processing” means using GAI systems in firm-wide administrative, security, or operational tools as part of standard functions, such as document management, email filtering, cybersecurity, or automated billing. GAI tools used for System-Wide Processing are exempt from the client opt-out and consent rights described above.
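For teams that build intake checklists or matter-management tooling, the client-rights rules above can be read as an ordered set of checks. The following is a minimal illustrative sketch only, not an FH system and not a substitute for the text of this Policy. All names in it (`DataClass`, `GaiRequest`, `may_use_gai`) are hypothetical. It assumes that a client opt-out blocks all matter-level GAI use, that de-identified processing involves no client information and so does not depend on delivery of the Notice, and it does not model the client and project level restrictions or the responsible attorney’s discretion to require consent.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class DataClass(Enum):
    """Hypothetical labels for the three processing tiers in this Policy."""
    DE_IDENTIFIED = "de-identified"
    NON_SENSITIVE_CONFIDENTIAL = "non-sensitive confidential"
    SENSITIVE_PERSONAL = "sensitive personal"


@dataclass
class GaiRequest:
    """Hypothetical description of one proposed use of a GAI tool."""
    tool_is_approved: bool
    data_class: DataClass
    system_wide_processing: bool = False
    client_opted_out: bool = False
    notice_provided: bool = False
    informed_written_consent: bool = False


def may_use_gai(req: GaiRequest) -> Tuple[bool, str]:
    """Return (allowed, reason) for a proposed use, under the stated assumptions."""
    # Only Approved GAI Tools may touch FH devices or FH information.
    if not req.tool_is_approved:
        return False, "tool is not an Approved GAI Tool"
    # System-Wide Processing is exempt from client opt-out and consent rights.
    if req.system_wide_processing:
        return True, "System-Wide Processing exemption"
    # A client opt-out restricts or prohibits matter-level use.
    if req.client_opted_out:
        return False, "client has opted out"
    # De-identified use involves no client information (assumption: Notice not required).
    if req.data_class is DataClass.DE_IDENTIFIED:
        return True, "de-identified processing"
    # Client information may not be processed before the client receives the Notice.
    if not req.notice_provided:
        return False, "client has not received the Notice"
    # Sensitive Personal Information additionally requires informed written consent.
    if (req.data_class is DataClass.SENSITIVE_PERSONAL
            and not req.informed_written_consent):
        return False, "informed written consent is required"
    return True, "permitted"
```

For example, `may_use_gai(GaiRequest(True, DataClass.SENSITIVE_PERSONAL, notice_provided=True))` returns a refusal because no informed written consent is on file. The checks run from the broadest rule to the narrowest so that the first failing rule is the reason reported.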

• Implementation of Client and Project Level Restrictions. Prior to using Approved GAI Tools for any client or client project, the responsible attorney for the matter must complete an evaluation to determine whether any regulatory, contractual, or other specific obligations apply to the client or project. Approved GAI Tools may not be used unless such requirements are identified and complied with. For example:

• Client-Imposed Restrictions. Clients may include GAI-related restrictions in their agreements or engagement letters. As described above, clients may also file an opt-out request to limit or prohibit the use of GAI tools to process their data.

• Flow-Down Restrictions. Clients may be subject to GAI limitations that flow down to Flynn Hodkinson, such as public-sector procurement rules (e.g. the California State GenAI Procurement Guidelines).

• Data Protection Requirements. Certain client data may be subject to heightened privacy or security obligations that require additional safeguards around GAI use, including but not limited to the CCPA, GDPR, or HIPAA.

• Court and Regulatory Rules. Some courts or regulators may require the use of GAI tools to be disclosed, documented, or certified. These rules may require a party to: (i) identify which GAI tool was used in drafting a court filing; (ii) explain how the GAI tool was used in preparing court filings or regulated submissions; (iii) verify the accuracy of AI-generated content using non-GAI authoritative sources; and/or (iv) confirm that no confidential or proprietary information was disclosed to unauthorized parties. Accordingly, prior to using Approved GAI Tools to prepare any materials that will be used in a judicial or regulatory proceeding, the supervising attorney will confirm what GAI rules apply to that proceeding.

• Data Minimization. Each Flynn Hodkinson Workforce member must limit the retention of data in Approved GAI Tools to only what is reasonably necessary to support the project or matter for which the Approved GAI Tools are used. Approved GAI Tools should not be used as long-term storage or recordkeeping systems. For example, Workforce members should delete conversations, documents, prompts, instructions, memory functions, and other related data when the associated client matter is closed, or if no longer reasonably needed to manage that specific project or matter.

• Education and Training. Flynn Hodkinson will coordinate education and training for all members of the FH Workforce on the responsible use of artificial intelligence, including the requirements of this Policy. This training should cover ethical implications, data privacy and security risks, and the effective practical use of Approved GAI Tools and extensions.

• Intellectual Property. The output of a Generative Artificial Intelligence tool may not be subject to intellectual property protection. Accordingly, Generative Artificial Intelligence output may not be incorporated into materials intended to have intellectual property protection without specific review and approval on a case-by-case basis. Absent such review and approval, all work product intended to receive intellectual property protection must be original work produced by Flynn Hodkinson Workforce members acting within the scope of their employment or engagement, and not work product produced by a GAI tool.

• Enhanced Periodic Review. As previously noted, the field of Artificial Intelligence is evolving rapidly. Accordingly, this Policy must be reviewed more frequently than other Flynn Hodkinson policies. FH will maintain an Artificial Intelligence Review Committee and a policy review schedule appropriate to the speed at which this technology is evolving and the rate at which AI-related tools are adopted within FH (and by our clients and vendors).

Schedule of Approved Generative AI Tools